Quick Regular Expression to clean off any tracking codes on URLs

I have to deal with scrapers all day long in my day job and I ban them in a multitude of ways: firewalls, .htaccess rules, my own personal logger system that checks the duration between page loads and other behaviour, and many other techniques.

However I also have to scrape HTML content sometimes for various reasons, such as to find a piece of content related to somebody on another website linked to my own. So I know both the methods used to detect and stop scrapers and the methods scrapers use to get past them.

This is a guide to various methods that scrapers use to avoid being caught and having their IP address added to a blacklist within minutes of starting. Knowing the methods people use to scrape sites will help you when you have to defend your own from scrapers, so it's good to know both attack and defense.

Sometimes it is just too easy to spot a script kiddy who has just discovered CURL and thinks it's a good idea to test it out on your site by crawling every single page and link available.

Usually this is because they have downloaded a script from the net, sometimes a very old one, and not bothered to change any of the parameters. Therefore when you see a client hammering you in your logfile with nothing but "CURL" as its user-agent, you can block it and know you will be blocking many other script kiddies as well.

I believe that when you are scraping HTML content from a site it is always wise to follow some golden rules based on Karma. It is not nice to have your own site hacked or taken down due to a BOT gone wild, therefore you shouldn't wish this on other people either.

Behave when you are doing your own scraping and hopefully you won't find your own site's content appearing on a Chinese rip off under a different URL anytime soon.

1. Don't overload the server you are scraping.

This only lets the site admin know they are being scraped, as your IP / user-agent will appear in their log files so regularly that you might be mistaken for someone attempting a DoS attack. You could find yourself added to a block list ASAP if you hammer the site you are scraping.

The best way to get round this is to put a time gap in-between each request you make. If possible follow the site's Robots.txt file if they have one and use any Crawl-Delay parameter they may have specified. This will make you look much more legitimate as you are obeying their rules.

If they don't have a Crawl-Delay value then randomise a wait time in-between HTTP requests, with at least a few seconds wait as the minimum. If you don't hammer their server and slow it down you won't draw attention to yourself.
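As a rough sketch of the two paragraphs above, the Python below uses the standard library's `urllib.robotparser` to honour a Crawl-Delay when one is present and otherwise falls back to a random wait. The function name `pick_delay` and the 3 to 10 second range are my own assumptions for illustration, and the standard parser only understands whole-number Crawl-Delay values.

```python
import random
from urllib.robotparser import RobotFileParser


def pick_delay(robots_txt, user_agent, min_wait=3.0, max_wait=10.0):
    """Return how many seconds to wait before the next request.

    robots_txt is the text of the site's robots.txt file (already downloaded).
    min_wait and max_wait are assumed defaults, not values from any standard.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())

    # crawl_delay() returns None when no Crawl-delay applies to this agent.
    delay = parser.crawl_delay(user_agent)
    if delay is not None:
        return float(delay)

    # No Crawl-delay given, so randomise a polite gap instead.
    return random.uniform(min_wait, max_wait)
```

In a crawl loop you would call `time.sleep(pick_delay(robots_txt, "MyBot"))` between requests, where `"MyBot"` stands in for whatever user-agent token you send.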

Also, if possible, try to always obey the site's Robots.txt file, as if you do you will find yourself on the right side of the Karmic law. There are many tricks people use, such as dynamic Robots.txt files and fake URLs placed within them, to lure scrapers who break the rules by following DISALLOWED locations into honeypots, never-ending link mazes or just instant blocks.
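Checking a URL against the DISALLOWED rules before requesting it is the simplest way to stay out of those traps. A minimal sketch, again with `urllib.robotparser`; the helper name `is_allowed` and the example paths are hypothetical.

```python
from urllib.robotparser import RobotFileParser


def is_allowed(robots_txt, user_agent, url):
    """Return True if robots.txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    rules = "User-agent: *\nDisallow: /private/\n"
    for link in ("http://www.example.com/public/page.html",
                 "http://www.example.com/private/trap.html"):
        # Skip anything disallowed: it may well be a honeypot.
        print(link, is_allowed(rules, "MyBot", link))
```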

An example of a simple C# Robots.txt parser I wrote many years ago, which can easily be edited to obtain the Crawl-Delay parameter, can be found here: Parsing the Robots.txt file with C-Sharp.

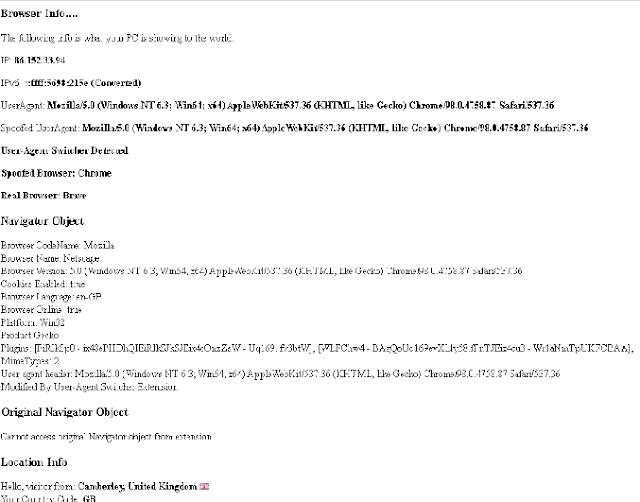

2. Change your user-agent in-between calls.

Many offices share the same IP across their network due to the outbound gateway server they use, and many ISPs hand the same IP address to different home users over time, e.g. through DHCP. Therefore, until IPv6 is 100% rolled out, there is no easy way to guarantee that by banning a user by their IP address alone you will get your target.

Changing your user-agent in-between calls and using a number of random and current user-agents will make you even harder to detect.

Personally I block all access to my sites that use a list of BOTS I know are bad or where it is obvious the person has not edited the user-agent (CURL, Snoopy, WGet etc), plus IE 5, 5.5, 6 (all the way up to 10 if you want).

I have found one of the most common user-agents used by scrapers is IE 6. Whether this is because the person using the script has downloaded an old tool with this as the default user-agent and not bothered to change it or whether it is due to the high number of Intranet sites that were built in IE6 (and use VBScript as their client side language) I don't know.

I just know that by banning IE 6 and below you can stop a LOT of traffic. Therefore never use old IE browser UAs and always change the default UA from CURL to something else such as Chrome's latest user-agent.

Using random numbers, dashes, very short user-agents or defaults is a way to get yourself caught out very quickly.
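A small sketch of rotating user-agents between calls, using only Python's standard library. The strings in `USER_AGENTS` are illustrative examples of the modern browser format, not a maintained list, so you would need to swap in current ones; `build_request` is a hypothetical helper name.

```python
import random
import urllib.request

# Example user-agent strings only (an assumption): keep your own list current.
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 "
    "(KHTML, like Gecko) Version/17.0 Safari/605.1.15",
    "Mozilla/5.0 (X11; Linux x86_64; rv:120.0) Gecko/20100101 Firefox/120.0",
]


def build_request(url):
    """Build a request that carries a randomly chosen user-agent."""
    headers = {"User-Agent": random.choice(USER_AGENTS)}
    return urllib.request.Request(url, headers=headers)
```

Passing the result to `urllib.request.urlopen` sends the request with that user-agent instead of the library default, which is exactly the kind of default that gets blocked.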

3. Use proxies if you can.

There are basically two types of proxy.

The first is the proxy where the owner of the computer knows it is being used as a proxy server, either generously, to allow people in countries such as China or Iran to access outside content, or for malicious reasons, to capture the requests and details for hacking purposes.

Many legitimate online proxy services such as "Web Proxies" only allow GET requests, float adverts in front of you and prevent you from loading up certain material such as videos, JavaScript loaded content or other media.

A decent proxy is one where you obtain the IP address and port number and then set them up in your browser or BOT to route traffic through. You can find many free lists of proxies and their port numbers online, although as they are free you will often find speed is an issue as many people are trying to use them at the same time. A good site to use to obtain proxies by country is http://nntime.com.
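Setting the IP address and port up in a BOT can look roughly like this in Python. It is a sketch using the standard library's `ProxyHandler`; the address `203.0.113.10` is a reserved documentation address used as a placeholder, and `build_proxy_opener` is a hypothetical helper name.

```python
import urllib.request


def build_proxy_opener(host, port):
    """Return an opener that routes HTTP and HTTPS traffic via host:port."""
    proxy_url = f"http://{host}:{port}"
    handler = urllib.request.ProxyHandler(
        {"http": proxy_url, "https": proxy_url}
    )
    return urllib.request.build_opener(handler)


if __name__ == "__main__":
    # Placeholder proxy details: replace with a proxy you are allowed to use.
    opener = build_proxy_opener("203.0.113.10", 8080)
    # opener.open("http://www.example.com/") would now go through the proxy.
```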

Common proxy port numbers are 8000, 8008, 8888, 8080 and 3128. When using P2P tools such as uTorrent to download movies it is always good to disguise your traffic as HTTP traffic by using one of these ports rather than the default setting of a random port on each request. It makes it harder, but obviously not impossible, for snoopers to see you are downloading torrents and other content.

You can find a list of ports and their common uses here.

The other form of proxy is a BOTNET, or a computer where ports have been left open and people have reverse engineered it so that they can use the computer/server as a proxy without the owner's knowledge.

I have also found that many people who try hacking or spamming my own sites are also using insecure servers.

A port scan on these people often reveals that their own server can itself be used as a proxy. If they are going to hammer me - then sod them I say as I watch US TV live on their server.

4. Use a rented VPS

If you are only required to scrape for a day or two then you can hire a VPS and set it up so that you have a safe non-blacklisted IP address to crawl from. With services like AmazonAWS and other rent by the minute servers it is easy to move your BOT from server to server if you need to do some heavy duty crawling.

However on the flipside I often find myself banning the AmazonAWS IP range (which you can obtain here) as I know it is so often used by scrapers and social media BOTS (bandwidth wasters).

5. Confuse the server by adding extra headers

There are many headers that can tell a server whether you are coming through a proxy, such as X-FORWARDED-FOR, and there is standard code used by developers to work backwards from the connecting address (REMOTE_ADDR) to the original client IP address, which can allow them to locate you through a Geo-IP lookup.

However not so long ago it was very easy to trick sites in one country into believing you were from that country by modifying the X-FORWARDED-FOR header and supplying an IP from the country of your choice, and many sites may still use this code.

I remember it was very simple to watch Comedy Central and other US TV shown online just by using a Firefox Modify Headers plugin and entering a US IP address for the X-FORWARDED-FOR header.

Due to the code they were using, they obviously thought that the presence of the header indicated that a proxy had been used and that the original country of origin was the spoofed IP address in this modified header rather than the value in REMOTE_ADDR.
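To make the weakness concrete, here is my reconstruction in Python of the kind of logic such sites presumably used. It is an assumption, not their actual code: `environ` is a CGI/WSGI-style dictionary of server variables, and both function names are hypothetical.

```python
def naive_client_ip(environ):
    """Trust X-Forwarded-For whenever the client supplies it (spoofable)."""
    forwarded = environ.get("HTTP_X_FORWARDED_FOR", "")
    if forwarded:
        # Takes the first address in the list as the "real" visitor,
        # even though the visitor typed this header in themselves.
        return forwarded.split(",")[0].strip()
    return environ.get("REMOTE_ADDR", "")


def connection_ip(environ):
    """Use only the address of the machine that actually connected."""
    return environ.get("REMOTE_ADDR", "")
```

With `REMOTE_ADDR` set to `203.0.113.9` and a spoofed header of `198.51.100.20`, the naive version reports `198.51.100.20`, so a Geo-IP lookup runs against the address the visitor chose.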

Whilst this code is not so common anymore it can still be a good idea to "confuse" servers by supplying multiple IP addresses in headers that can be modified to make it look like a more legitimate request.

As the actual REMOTE_ADDR value is not a header at all but the address of the machine that made the connection to the server, you cannot easily change it. However you can supply a comma delimited list of IPs from various locations in headers such as X-FORWARDED-FOR, HTTP_X_FORWARDED, HTTP_VIA and the many others that proxies, gateways, and different servers use when passing HTTP requests along the way.
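A sketch of building such headers in Python. Note that names like HTTP_X_FORWARDED and HTTP_VIA are how the server sees them; on the wire the headers are sent as `X-Forwarded` and `Via`. The IP addresses are reserved documentation addresses, and `proxy.example` and the function name are placeholders of my own.

```python
import urllib.request


def build_forwarding_headers(ip_chain, via_host="proxy.example"):
    """Return headers that mimic a request passed along by proxies.

    ip_chain is a list of IP address strings, first hop first.
    via_host is a made-up proxy name used for the Via header.
    """
    joined = ", ".join(ip_chain)
    return {
        "X-Forwarded-For": joined,
        "X-Forwarded": joined,
        "Via": f"1.1 {via_host}",
    }


if __name__ == "__main__":
    headers = build_forwarding_headers(["198.51.100.20", "192.0.2.4"])
    request = urllib.request.Request("http://www.example.com/", headers=headers)
    # urllib.request.urlopen(request) would send these extra headers.
```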

Plus you never know, if you are trying to obtain content that is blocked from your country of origin then this old technique may still work. It all depends on the code they use to identify the country of an HTTP request's origin.

6. Follow unconventional redirect methods.

Remember there are many levels of being able to block a scrape, so making it look like a real request is the ideal way of getting your content. Some sites will use intermediary pages that have a META Refresh of "0" that then redirect to the real page, or use JavaScript to do the redirect such as:

<body onload="window.location.href='http://blah.com'">

or

<script>
function redirect(){
    document.location.href='http://blah.com';
}
setTimeout(redirect,50);
</script>

Therefore you want a good super scraper tool that can handle this kind of redirect so you don't just return adverts and blank pages. Practice those regular expressions!
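As a starting point for those regular expressions, this Python sketch pulls the target URL out of a META Refresh tag or a simple JavaScript `location` assignment like the ones above. The patterns are deliberately simple assumptions: they expect `http-equiv` to come before `content` and the JavaScript URL to be a quoted literal, so real pages will need more cases.

```python
import re

# META refresh, e.g. <meta http-equiv="refresh" content="0; url=http://...">
META_REFRESH = re.compile(
    r"<meta[^>]+http-equiv=[\"']?refresh[\"']?[^>]*"
    r"content=[\"']?\s*\d+\s*;\s*url=[\"']?([^\"'>\s]+)",
    re.IGNORECASE,
)

# JavaScript, e.g. window.location.href='http://...' or document.location='...'
JS_LOCATION = re.compile(
    r"(?:window|document)\.location(?:\.href)?\s*=\s*[\"']([^\"']+)[\"']",
    re.IGNORECASE,
)


def find_redirect(html):
    """Return the first redirect target found in html, or None."""
    for pattern in (META_REFRESH, JS_LOCATION):
        match = pattern.search(html)
        if match:
            return match.group(1)
    return None
```

If `find_redirect` returns a URL, request that URL next rather than treating the intermediary page as the content.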

7. Act human.

By only making one GET request to the main page and not to any of the images, CSS or JavaScript files that the page loads in, you make yourself look like a BOT.

If you look through a log file it is easy to spot crawlers and BOTs because they don't obtain these extra files. As a log file is mainly sequential you can easily spot the requests made by one IP or user-agent just by scanning down the file and noticing all the single GET requests from that IP to different URLs.
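From the defender's side, that scan down the log file can be automated. The Python sketch below assumes log lines in the common Apache-style format (IP first, request in quotes) and flags any IP that asked for several pages but never a single image, CSS or JavaScript file. The asset extensions, the `min_pages` threshold and the function name are assumptions to tune for your own site.

```python
import re
from collections import defaultdict

# Paths ending in these extensions count as page assets (an assumed list).
ASSET_PATTERN = re.compile(
    r"\.(?:css|js|png|jpe?g|gif|ico|svg|woff2?)(?:\?|$)", re.IGNORECASE
)
# Captures the client IP and the requested path from each log line.
LOG_LINE = re.compile(r'^(\S+) \S+ \S+ \[[^\]]*\] "(?:GET|POST|HEAD) (\S+)')


def suspected_bots(log_lines, min_pages=3):
    """Return sorted IPs that requested pages but never any assets."""
    pages = defaultdict(int)
    assets = defaultdict(int)
    for line in log_lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # Ignore lines that are not in the expected format.
        ip, path = match.groups()
        if ASSET_PATTERN.search(path):
            assets[ip] += 1
        else:
            pages[ip] += 1
    return sorted(
        ip for ip, count in pages.items()
        if count >= min_pages and assets[ip] == 0
    )
```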

If you really want to mask yourself as human then use a regular expression or HTML parser to get all the related content as well.

Look for any URLs within SRC and HREF attributes as well as URLs contained within JavaScript that are loaded up with AJAX. It may slow your own code down and use up more bandwidth, both yours and that of the server you are scraping, but it will disguise you much better and make it harder for anyone looking at a log file to pick you out as a BOT with a simple search.
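Taking the HTML parser route, a minimal Python sketch with the standard library's `html.parser` collects every SRC and HREF value and turns it into an absolute URL you can then request. It does not look inside JavaScript for AJAX URLs, and the class and function names are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkedUrlCollector(HTMLParser):
    """Collect absolute URLs from src and href attributes."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.urls = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            # Skip empty values and in-page anchors such as "#top".
            if name in ("src", "href") and value and not value.startswith("#"):
                absolute = urljoin(self.base_url, value)
                if absolute not in self.urls:
                    self.urls.append(absolute)


def related_urls(html, base_url):
    """Return the URLs referenced by html, in document order."""
    collector = LinkedUrlCollector(base_url)
    collector.feed(html)
    collector.close()
    return collector.urls
```

Requesting the image, CSS and script URLs from this list after the main page makes your entries in the log look much more like a browser's.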

8. Remove tracking codes from your URLs.

This is so that when the SEO "guru" looks at their stats they don't confuse their tiny little minds by not being able to work out why it says 10 referrals from Twitter but only 8 had JavaScript enabled or had the tracking code they were using for a feed. This makes it look like a direct, natural request to the page rather than a redirect from an RSS or XML feed.

Here is an example of a regular expression that removes the whole query-string, including the question mark.

The example uses PHP but the expression itself can be used in any language.

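// Example URL carrying feed tracking parameters
$url = "http://www.somesite.com/myrewrittenpage?utm_source=rss&utm_medium=rss&utm_campaign=mycampaign";
// Strip everything from the first question mark onwards
$url = preg_replace('@^([^?]+)\?.*$@', '$1', $url);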
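The same idea in Python, as a sketch. `strip_query` mirrors the regular expression approach and drops the whole query-string. `strip_tracking` is a gentler variant of my own, not from the PHP above, that removes only parameters starting with `utm_` so that genuine parameters such as a page id survive. The `utm_` prefix list is an assumption to extend for other tracking schemes.

```python
import re
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def strip_query(url):
    """Remove everything from the first question mark onwards."""
    return re.sub(r"^([^?]+)\?.*$", r"\1", url)


def strip_tracking(url, prefixes=("utm_",)):
    """Remove only query parameters whose names start with a tracking prefix."""
    parts = urlsplit(url)
    kept = [
        (name, value)
        for name, value in parse_qsl(parts.query, keep_blank_values=True)
        if not name.startswith(prefixes)
    ]
    return urlunsplit(parts._replace(query=urlencode(kept)))
```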

There are many more rules to scraping without being caught but the main aspect to remember is Karma.

What goes around comes around, therefore if you scrape a site heavily and hammer it so badly that it costs the owner more bandwidth and money than they can afford, do not be surprised if someone comes and does the same to you at some point!